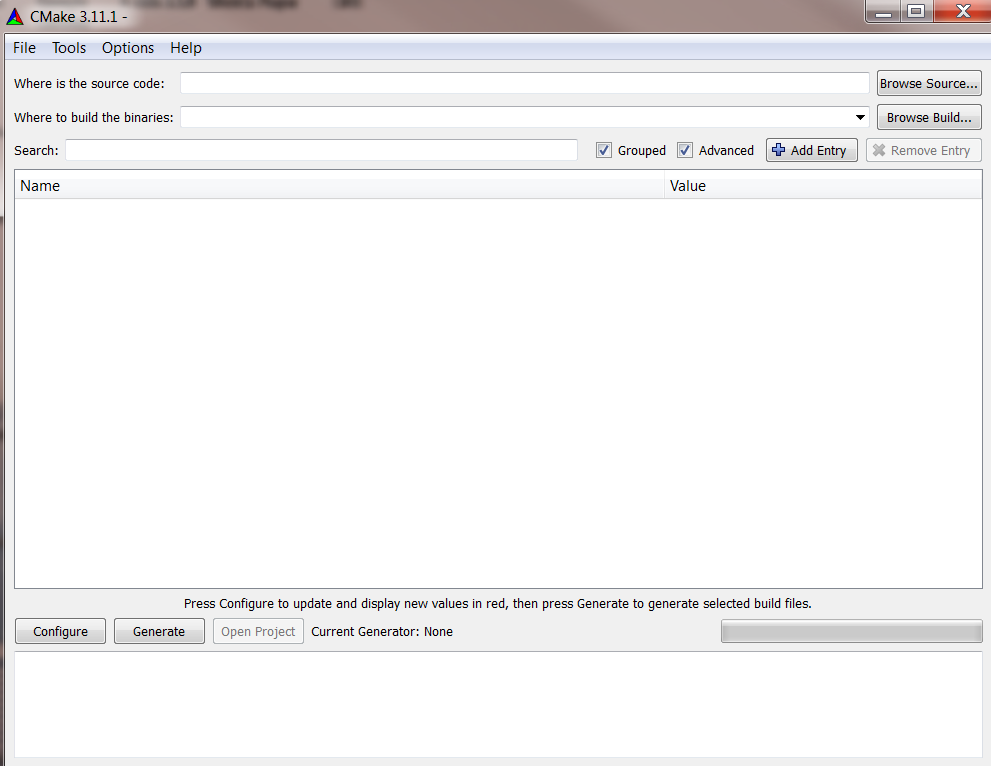

Make (the tool, or direct use of it via a Makefile) is not outdated, particularly for "small, personal projects" like the ones you use it for. Of course, you can also use it for larger projects, including those targeted at multiple platforms. With target-specific variables you can easily customize how you build for different platforms. Nowadays, Linux distributions come with cross-compiling toolchains (e.g. mingw-w64), so you can build a complete Windows software package (with an installer if you like) from Linux, all driven from your Makefile.

Once upon a time, high-level languages were just an idea. Back then there were severe hardware limitations:

- there were no graphical tools, so "plain text" ended up being used for the input file format;
- computers typically had an extremely tiny amount of RAM, so the source code had to be broken into pieces and the compiler got split into separate utilities (pre-processor, compiler, assembler, linker);
- CPUs were slow and expensive, so you couldn't do expensive optimisations, etc.

Of course, with many source files and many utilities being used to create many object files, it's a mess to build anything manually. As a work-around for the design flaws in the tools (caused by severely limited hardware), people naturally started writing scripts to remove some of the hassle. To work around the problems with scripts (which were a work-around for the design flaws in the tools caused by limited hardware), people eventually invented utilities to make things easier, like make. To work around the problems with makefiles (which were a work-around for a work-around for the design flaws in the tools caused by limited hardware), people started experimenting with auto-generated makefiles. This started with things like getting the compiler to generate dependencies and led up to tools like autoconf and CMake.

This is where we are now: work-arounds for work-arounds for a work-around for the design flaws in the tools caused by limited hardware. It's my expectation that by the end of the century there'll be a few more layers of "work-arounds for work-arounds" on top of the existing pile. Ironically, the severe hardware limitations that caused all of this disappeared many decades ago. The only reason we're still using such an archaic mess now is "this is how it's always been done".

In the end, make is still powerful enough to provide all the desired functionality, such as conditional compilation of changed sources. Saying make is outdated would be the same as saying that writing custom linker scripts is outdated.

But what raw make doesn't provide are extended functionalities and stock libraries for comfort, such as parametric header generation, an integrated test framework, or even just conditional libraries in general. On the other hand, CMake is directly tailored towards generating generic ELF libraries and executables. Whenever you depart from its predefined procedures, you have to start hacking CMake just as you used to hack your own Makefiles.

You can create far more in C++ than just your average application for a system that knows ELF loaders, so CMake surely isn't suited for all scenarios. However, if you are working in such a standardized environment, CMake or any of these other modern script generator frameworks are certainly your best friends. It always depends on how specialized your application is, and how well the structure of the CMake framework fits your workflow.
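The core job credited to make above is conditional compilation of changed sources: rebuild a target only if it is missing or one of its prerequisites is newer. Below is a minimal Python sketch of that rule. It is not how GNU make is implemented. The `rules` and `recipes` tables are hypothetical stand-ins for a Makefile's `target: prerequisites` lines and their recipes. One deliberate simplification is also assumed: a prerequisite remade earlier in the same run always forces its dependents to rebuild.

```python
import os
from typing import Callable, Dict, List, Optional, Set

# Hypothetical rule table: target -> prerequisites, like "target: prereqs" in a Makefile.
Rules = Dict[str, List[str]]
# Hypothetical recipe table: target -> function that (re)creates that target's file.
Recipes = Dict[str, Callable[[str], None]]


def needs_rebuild(target: str, prereqs: List[str], remade: List[str]) -> bool:
    """Basic timestamp test: the target is missing or a prerequisite is newer.

    Simplification of this sketch: a prerequisite remade earlier in the same
    run also forces a rebuild, so coarse file timestamps cannot hide it.
    """
    if not os.path.exists(target):
        return True
    target_mtime = os.path.getmtime(target)
    return any(p in remade or os.path.getmtime(p) > target_mtime for p in prereqs)


def build(target: str, rules: Rules, recipes: Recipes,
          visited: Optional[Set[str]] = None,
          remade: Optional[List[str]] = None) -> List[str]:
    """Bring `target` up to date depth-first; return the targets actually rebuilt, in order."""
    if visited is None:
        visited = set()
    if remade is None:
        remade = []
    if target in visited:  # consider each target once per run (handles shared prerequisites)
        return remade
    visited.add(target)
    prereqs = rules.get(target, [])
    for prereq in prereqs:  # bring prerequisites up to date before judging the target
        build(prereq, rules, recipes, visited, remade)
    # Files without a rule are plain sources: they are only compared, never rebuilt.
    if target in rules and needs_rebuild(target, prereqs, remade):
        recipes[target](target)
        remade.append(target)
    return remade
```

Take rules like `{"app": ["main.o", "util.o"], "main.o": ["main.c"], "util.o": ["util.c"]}`. A second `build("app", ...)` right after the first rebuilds nothing. Making only `util.c` newer then rebuilds just `util.o` and `app`. This is the saving make gives you over a script that recompiles everything.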
